The Weekend Read

November 29, 2025

I traveled through airports and reported in sports stadiums this year. At each, I was asked to scan my face for security.

An AI security camera demo at an event in Las Vegas, Nevada.

(Bridget Bennett / Getty Images)

In the fall, my partner and I took two cross-country flights in quick succession. The potential dangers of flying, exacerbated by a few high-profile plane crashes earlier in the year, seemed to subside in the national consciousness. There were other tragedies and failures to worry about. Still, the ordeal of flying necessitates the ordeal of passing through airport security, one of the United States’s most obvious, and frustrating, post-9/11 bureaucratic slogs.

By the time I was old enough to fly as an unaccompanied minor in 2003, the irrevocability of the TSA, much like other government acronyms (FBI, CIA, DHS), had become so firmly established as to seem permanent. I remember, in 2006, when it was announced, after a liquid bomb threat in London, that liquids in luggage would be restricted to the size of a 3.4 ounce container and shoe removal would become mandatory. I remember the beginning of TSA PreCheck, and the implementation of full body scanners. What I don’t remember is when exactly we started to be asked to scan our faces in order to get past the security line.

On our first fall trip, my partner and I just happened to be flying on 9/11. “Happened to” is inaccurate; we chose to fly on that date given how, according to our logic, the lingering superstition of plane hijackings would result in fewer people buying plane tickets, and thus presumably shorter security lines and less crowded flights. Maybe in previous years this would have been the case. On this year’s 9/11, there were as many travelers as there had ever seemed to be.

In front of us, a man made his way to the TSA agent at the security checkpoint. The agent asked for the man’s ID, then motioned for him to stand in front of a camera, which was embedded in a small screen that displayed a cutout where his face would be captured. Instead, the man asked that his photograph not be taken, an option I knew to be technically available but one I had never seen a traveler actually make use of. Most people, including myself, have simply acquiesced to the new format: The screen stresses that a passenger may opt out by advising “the officer if you don’t want your picture taken,” but also emphasizes that the picture, once shot, is immediately deleted. Little reporting has been done about whether this is true: whether the picture is really deleted, and in what circumstances it would be saved. All official information comes from the TSA, which has said it retains photos “in uncommon cases.” As with so many technologies that are used for surveillance but are currently optional, there’s a pressure to simply give in. It takes a few seconds. Why not?

This logic has always troubled me. Until this stranger modeled how simple it was to say no, I had assumed that, given the way airport security typically functions, and especially given the Trump administration’s blackbagging of suspected criminals and migrants off city streets in broad daylight and the invasion of privacy by police and other law enforcement agencies, opting out would only make the process slower and more bureaucratic than it already is. But after the man in front of me opted out, the TSA agent just asked for his boarding pass, scanned it, and moved on. My partner and I followed suit. Until the rules inevitably change, we may never opt in again.

Security checkpoints have always been fraught for me. I had never thought to worry about increased scrutiny based on my ethnicity until I was in my teens, when it became impossible to ignore how often I’d be pulled aside for additional screening at sporting events, in subways, and, most often, in airports. It wasn’t a technology enforcing that bias; it was other people. Traveling in public feels more tenuous now, with technology added onto already existing human error.

Facial recognition, by no means a new concept, still has the valence of a far-off technology, one whose use is better in theory than in practice. In 2017, certain airlines like JetBlue, in collaboration with Customs and Border Protection, began trial runs of a new system that allowed passengers to choose to scan their face instead of their boarding passes. Immediately, concerns over privacy were raised, but the upside, according to airline executives, was efficiency and enhanced security. A quote by Benjamin Franklin comes to mind, often used when discussing privacy concerns, though its original context is more prosaic: “Those who would give up essential liberty to purchase a little temporary safety deserve neither liberty nor safety.” Franklin was referring to a taxation dispute involving the Pennsylvania General Assembly, not invasive technology. Still, the sentiment in this context offers a productive perspective to engage with: the urgent awareness of encroaching potential civil rights infringements and voluntary abdications of privacy.

It turns out there’s a term for this, “mission creep,” or, per Merriam-Webster, “the gradual broadening of the original objectives of a mission or organization.” I’m compelled by the Cambridge Dictionary’s addition to the definition, “so that the original purpose or idea begins to be lost.”

Dreams of technological advancement are really fantasies of convenience. Tech entrepreneurs, whose delusions the general public is forced to watch come to fruition, frame the future in negative and/or substitutional terms, swapping out the supposedly cumbersome and analog in favor of the stripped down, digital, and efficient. Take Elon Musk and DOGE, or Meta and its heavy investment in wearable augmented-reality technology. Soon, we’ll no longer have to X, the tech capitalist mindset goes. Wouldn’t it be amazing if you could just Y?

One of the technological focuses of the past several years has been speed: reducing wait times, shortening lines, erasing friction in daily public life wherever possible. To enact this, clunky old systems must be eliminated in favor of newer, sleeker models. Such upgrades, whether to airport security, public school monitoring systems, personal surveillance software, or federal housing, are never described as anything other than a kind of refresh. But really, every time there’s a give, there’s always a take.

In May, Minneapolis’s police department, infamous the world over for the murder of George Floyd in 2020, announced that it was considering trading over 2 million mugshots in its database to the tech company Biometrica for free use of its facial recognition program. Pushback from local officials and residents ensued, with the police department responding that it was taking public concerns seriously while still considering the offer. The deal would involve Biometrica receiving data from the police department in exchange for software. Alan Rubel, an associate professor at the University of Wisconsin–Madison studying public health surveillance and privacy, spoke to WPR about the issue and drew attention to the offer’s language, saying a trade rather than buying the data would be “very helpful for that company. We’ve collected this data as part of a public investment, in mugshots and the criminal justice system, but now all that effort is going to go to training an AI system.”

Disproportionately represented races in the American criminal justice system can only mean disproportionate bias in an AI system trained to recognize certain kinds of faces. Those with records, and those without; legal and undocumented immigrants. It’s difficult, in these scenarios, not to parrot the same othering language as the state, to enforce a divide between “us” and “them.” I imagine this is a subtle knock-on effect of the normalization of these procedures and these surveillance technologies, the constant separation between good citizens and bad. Indeed, as Trump’s crackdown on immigrants continues to ensnare brown people regardless of legal status, ICE agents have employed facial recognition tools on their cell phones to identify people on the street, scanning faces and irises to both gather data and compare photos to various troves of location-based information.

Lost in this anxiety over the potential use of biometric data by private companies and federal agencies is how exactly that data, whether a retina scan or fingerprint, is verified. Capturing these various pieces of information for the sake of surveillance is useless without a database to measure against. Of course, as with the TSA, nearly all of these tech companies are working in tandem with various branches of the government to check photos and prints against passport and Social Security records. For now, much of this data is separated between different agencies rather than stored in a single, unified database. But AI evangelists like tech billionaire Larry Ellison, cofounder of Oracle, envision a world where governments house all their private data in a single server for the purposes of empowering AI to cut through red tape. In February, speaking via video call at the World Governments Summit in Dubai to former UK prime minister Tony Blair, Ellison urged any country that hopes to take advantage of “incredible” AI models to “unify all of their data so it can be used and consumed by the AI model. You have to take all of your healthcare data, your diagnostic data, your electronic health records, your genomic data.”

Ellison’s comments at similar gatherings point to his assumption that, with the proliferation of AI in every digital appliance, a kind of beneficent panopticon will emerge, with AI used as a check. “Citizens will be on their best behavior because we’re constantly watching and recording everything,” Ellison said at Oracle’s financial analyst meeting last September. How exactly this would result in anything like justice isn’t specified. Rampant surveillance is already being used by law enforcement in “troubled” areas with low-income residents, high concentrations of black and Latino workers, and little local municipal funding. Police helicopters frequently patrol the area where I live in Las Vegas, Nevada, flying low enough to shake our windows and drown out all other sounds.

In the last year, Vegas’s metropolitan police department set up a mobile audiovisual monitoring station in a shopping mall down the street from me. It’s a neighborhood that has been steadily hollowed out by rising home prices, a mix of well-off white retirees and working-class black laborers with long commutes into the city. Periodically, an automated announcement plays, assuring passersby that they are being recorded for their own safety.

While reporting at Las Vegas’s Sphere this past summer, I waited in line for a show among a throng of tourists and noticed, prior to passing through a security checkpoint, large screens proclaiming that facial recognition was being used “to improve your experience, to help ensure the safety and security of our venue and our guests, and to enforce our venue policies.” A link directing visitors to Sphere’s privacy policy website was displayed below the notice, but this policy makes no direct mention of “facial recognition.” Instead, it outlines the seemingly incidental collection and use of “biometric information” writ large, captured while one is at Sphere or any of the properties owned by the Madison Square Garden Family. This information can be shared with essentially any third party MSG Family deems legitimate. A visitor agrees to this once they have made use of any of MSG Family’s services, or seemingly just by stepping onto their property. The company has already gotten in trouble for abusing this technology. In 2022, The New York Times reported on an instance of MSG Entertainment’s banning a lawyer involved in litigation against the company from Radio City Music Hall, which MSG Entertainment owns. The company also used facial recognition to enforce an “exclusion list,” which included other individuals MSG had contentious business relations with. Per Wired, “Other lawyers banned from MSG Entertainment properties, including Madison Square Garden, sued the company over its use of facial recognition, but the case was ultimately dismissed.”

What’s so nebulous and nefarious here is the increasing inability of ordinary people to opt out of these services, marshaled in the name of security and convenience, where the question of how exactly our biometric data is used cannot be readily answered. By now, most people know their data is sold to third-party companies for the purposes of, say, targeted advertising. While lucrative, advertising is becoming a lower-tier use. The ACLU has drawn attention to the increased use of facial recognition in public venues like sports stadiums, where, as with the TSA, it is said to be implemented for the public’s benefit and security. Using such technology to control access is a big step in the wrong direction. But adding facial recognition to the process isn’t a matter of shoring up competence. Instead, it allows for the normalization of surveillance.

Bipartisan anxiety and inquiry around this issue, especially facial recognition in airports, have been met with opposition from airlines, who claim that these methods make for a “seamless and secure” travel experience. But companies at the forefront of the push to integrate biometric capture into the fabric of daily life are exploiting temporary gaps in regulation, choosing self-limitation and textual vagaries. There’s a sense these companies are simply waiting to see how the federal government will or won’t enforce guidelines around facial recognition. According to a 2024 government report, “There are currently no federal laws or regulations that expressly authorize or limit FRT use by the federal government.”

A familiar fear arises here, once ambient but growing more visceral and palpable with each right-wing provocation against civil rights and personal autonomy. That the desires of the Trump administration dovetail with Silicon Valley’s warped vision of the future only exacerbates a tenuous situation. We have been passing through a steadily changing surveillance landscape, the tools of which are becoming harder to ignore. The perennial question remains: What are we willing to trade for convenience and the illusion of security?

For starters, the public must be wary, and violations of data privacy need to dwindle. Small instances of refusal, like not having your photo taken by the TSA, likely won’t snowball into radical acts (the systems in which these technologies are enmeshed are already massive and entrenched), but they are still some of the few chances the public has to choose when their data is taken. The desire for privacy, rather than an admission of guilt as Republicans like to imply, is a precious thing. It’s worth taking a few minutes and some minor irritation to preserve it.

Extra from The Nation

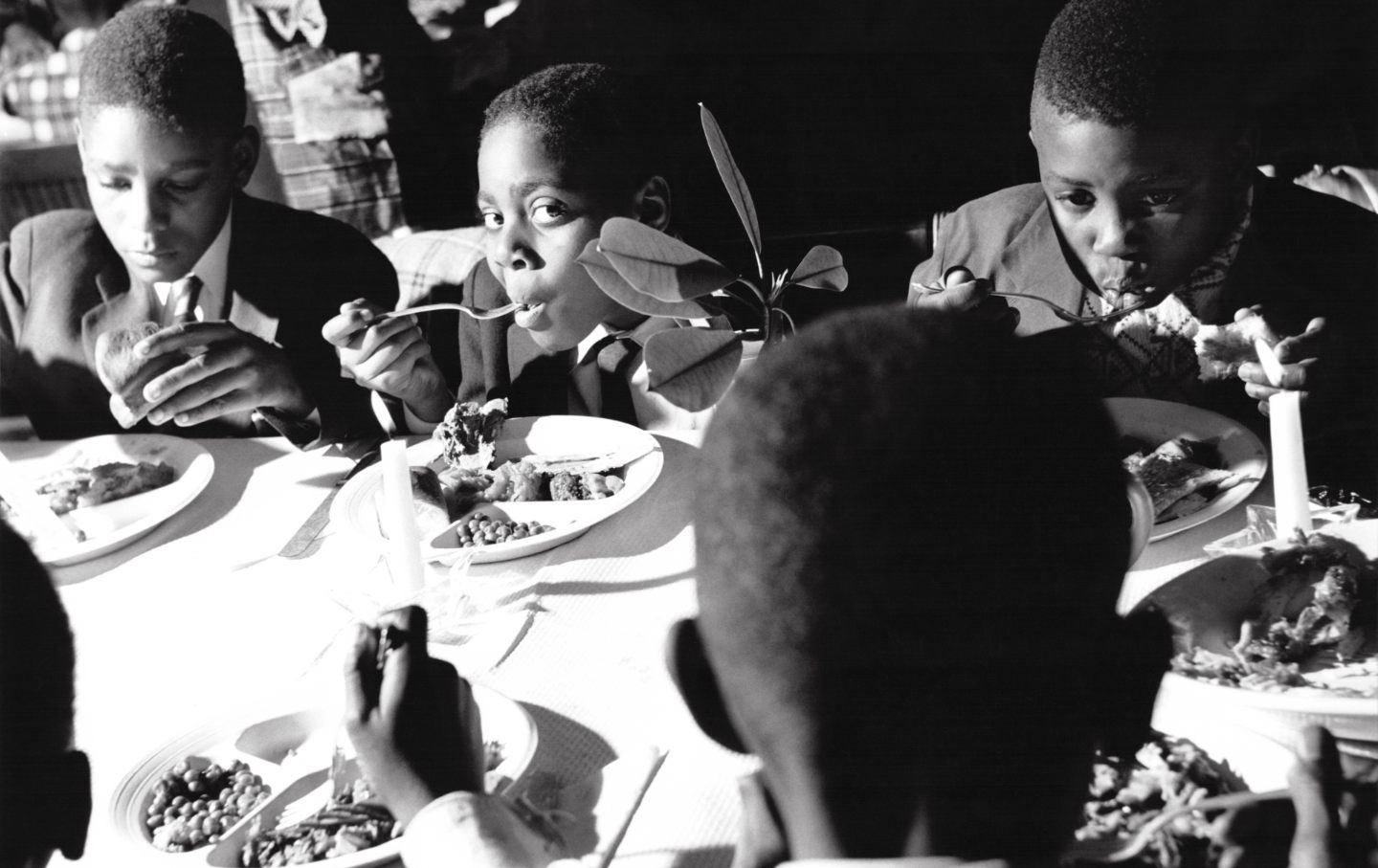

The vacation’s actual roots lie in abolition, liberation, and anti-racism. Let’s reconnect to that legacy.

Kali Holloway

The settlers who arrived in Plymouth weren’t escaping non secular persecution. They left on the Mayflower to ascertain a theocracy within the Americas.

Jane Borden

Alice had the flexibility to look to the long run and a world the place legal guidelines and attitudes didn’t preserve disabled folks poor, pitied, and remoted.

Rebecca Cokley

To start with, to be able to ask questions in regards to the younger ladies he preyed on, they’d have to see them as folks.

Katha Pollitt

Jessica Adams was barred from instructing a course on social justice for six weeks after displaying a graphic that listed MAGA and Columbus Day as types of “covert white supremacy.”

StudentNation

/

Ella Curlin

An interview with the creator of a brand new e-book documenting the brutalities of childbirth within the post-Dobbs period.

Regina Mahone